Drones in 2026 are smarter than ever. Thanks to computer vision, they no longer rely on rigid paths - they analyze surroundings in real time to navigate, avoid obstacles, and operate independently. Industries like mining, urban deliveries, and disaster response are embracing these advancements, with the AI-powered drone market projected to triple from $20.2 billion in 2025 to $61.6 billion by 2032.

Key upgrades in 2026 include:

- AutoFly Model: Improved navigation accuracy by 3.9% using advanced depth-aware features.

- Edge AI Processing: Cuts latency by handling data onboard, which is crucial in remote areas with poor connectivity.

- Vision-Language Models: Enable drones to handle complex commands like "inspect the power line" autonomously.

- SLAM and Sensor Fusion: Boost navigation in GPS-deprived zones like mines and warehouses.

These advancements are transforming industries:

- Delivery: Amazon drones now complete deliveries in under an hour using Beyond Visual Line of Sight (BVLOS) tech.

- Inspections: Drones detect structural issues in real time, reducing risks for humans.

- Search and Rescue: Thermal imaging paired with AI identifies survivors in tough conditions.

Computer vision is the backbone of this evolution, enabling drones to "see" and make decisions like human pilots. From warehouses to disaster zones, this tech is reshaping how drones work.



Core Computer Vision Technologies for Drones

Computer Vision Technologies in Drone Navigation: CNNs, SLAM, and Visual Odometry Comparison

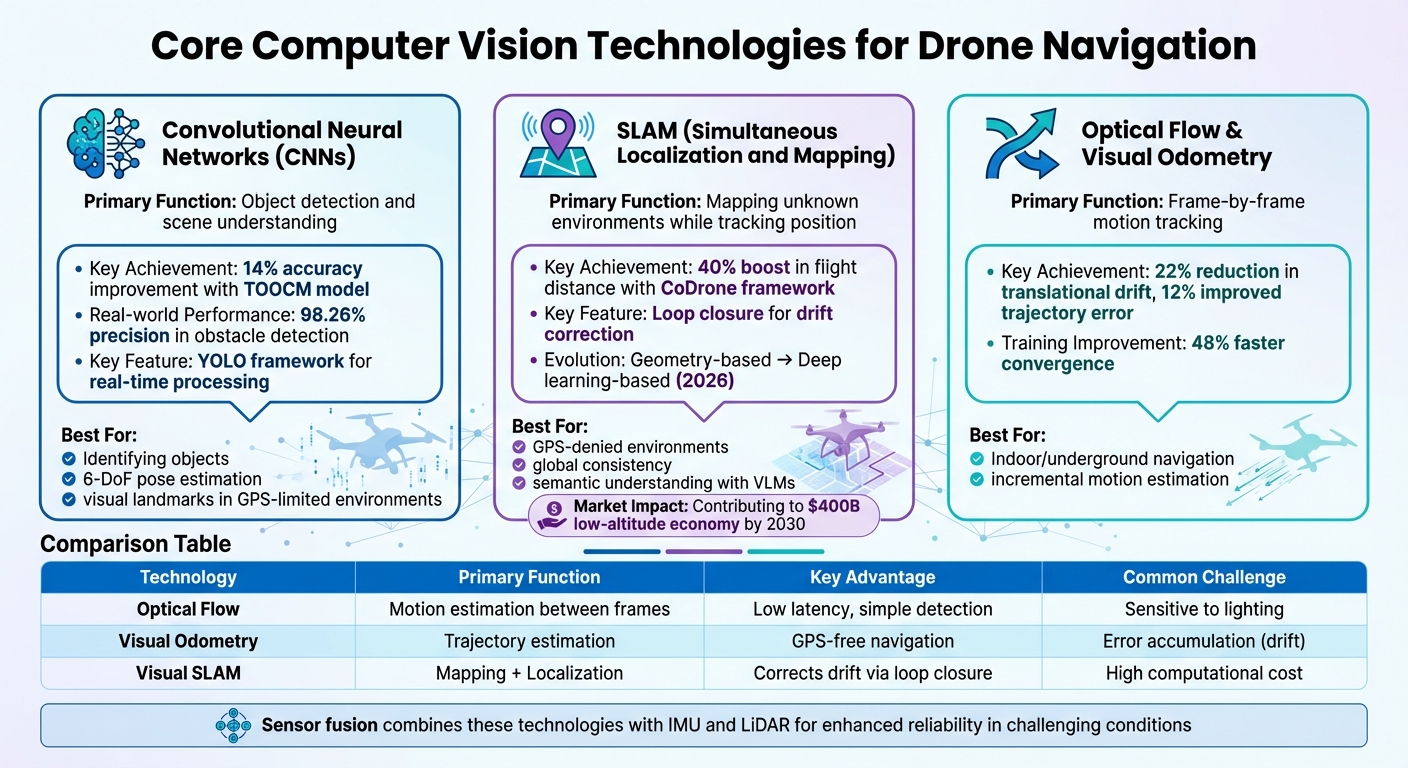

The advancements achieved in 2026 have significantly shaped how drones use computer vision. By combining Convolutional Neural Networks (CNNs), Simultaneous Localization and Mapping (SLAM), and visual odometry, drones can now detect objects, map environments, and track motion with remarkable precision. These technologies form the backbone of modern drone capabilities.

Convolutional Neural Networks (CNNs)

CNNs play a central role in enabling drones to detect objects and understand their surroundings. Through multiple layers, they automatically learn visual features, making them indispensable for identifying complex objects. A standout framework, YOLO (You Only Look Once), processes each frame in a single pass, which makes detection fast enough to support real-time navigation.

Advanced models like TOOCM (Texture-variant Obstacle Object Classification Model) have pushed detection accuracy up by 14% in environments filled with obstacles. Additionally, CNNs empower drones with 6-degree-of-freedom (6-DoF) pose estimation, allowing them to pinpoint an object's position and orientation. This is especially useful for tasks like inspecting power poles or maneuvering through tight spaces.

In GPS-limited environments such as mines or warehouses, CNNs extract visual landmarks from camera feeds, ensuring reliable navigation. Real-world applications highlight these capabilities: CNN-based inspection systems achieve a 98.26% precision rate in detecting obstacles like potholes in real time, and modern segmentation models can isolate objects like power lines from dense vegetation. This strong foundation in object recognition sets the stage for effective mapping and localization.
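As an illustration of what happens after a detector of this kind produces candidate boxes, the sketch below shows non-maximum suppression, a post-processing step commonly paired with single-pass detectors. It is a minimal pure-Python version, not the code of any model named above; the box coordinates, scores, and the 0.5 overlap threshold are made-up values for the example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max) in pixels


def iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(
    boxes: List[Box], scores: List[float], iou_threshold: float = 0.5
) -> List[int]:
    """Return indices of the boxes to keep, highest score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Hypothetical detections: two overlapping boxes on one obstacle, one separate.
boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
scores = [0.9, 0.8, 0.7]
kept = non_max_suppression(boxes, scores)
```

Here the second box overlaps the first with an IoU of about 0.82, so only indices 0 and 2 survive. Production pipelines usually run this step in vectorized form on the accelerator.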

Simultaneous Localization and Mapping (SLAM)

SLAM addresses the challenge of navigating unknown environments by building maps while estimating the drone's position and orientation simultaneously. A key feature of SLAM is loop closure, where the system recognizes previously visited locations to correct positional drift. This ensures accuracy over time, even in complex environments.
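To make the idea of loop closure concrete, here is a deliberately simplified sketch. It assumes the drone has recognized that its last pose coincides with its first, and it spreads the accumulated error linearly along the path. Real SLAM back ends generally solve a pose-graph optimization instead; the function name and the trajectory values are hypothetical.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in metres


def close_loop(trajectory: List[Point]) -> List[Point]:
    """Spread the end-of-loop error linearly over all poses.

    Assumes place recognition has established that the last pose
    should coincide with the first pose.
    """
    n = len(trajectory) - 1
    if n < 1:
        return list(trajectory)
    err_x = trajectory[-1][0] - trajectory[0][0]
    err_y = trajectory[-1][1] - trajectory[0][1]
    # Pose i receives the fraction i/n of the total correction.
    return [
        (x - err_x * i / n, y - err_y * i / n)
        for i, (x, y) in enumerate(trajectory)
    ]


# Hypothetical square flight that should end at the origin but drifted.
drifted = [(0.0, 0.0), (1.0, 0.1), (1.1, 1.1), (0.2, 1.2), (0.4, 0.4)]
corrected = close_loop(drifted)
```

After correction the first pose is unchanged and the last pose lands back on the start, with intermediate poses shifted proportionally.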

By 2026, SLAM had evolved from geometry-based approaches to deep learning-based pipelines that handle dynamic elements and challenging imaging conditions.

"Visual SLAM's performance is subject to diverse environmental challenges, such as dynamic elements in outdoor environments, harsh imaging conditions in underwater environments, or blurriness in high-speed setups"

Modern SLAM systems also utilize cloud-edge-end collaboration to overcome the processing limitations of onboard systems. For instance, the CoDrone framework, developed in late 2025, combines the Depth Anything V2 model on edge servers with the Qwen-VL-Max Vision-Language Model in the cloud. Tests in the AirSim simulation environment showed a 40% boost in average flight distance and a 5% improvement in navigation quality metrics. By offloading complex tasks to the cloud, drones can focus on real-time control while resolving ambiguities that simpler onboard systems struggle with.

The latest advancement, Semantic SLAM, incorporates Vision-Language Models to enhance scene understanding. This allows drones to go beyond recognizing objects - they can understand their context and how to interact with them.

"The introduction of VLM enhances open-set reasoning capabilities in complex and previously unseen scenarios"

With technologies like SLAM driving growth, the low-altitude economy is projected to reach $400 billion by 2030.

Optical Flow and Visual Odometry

While SLAM ensures global consistency, optical flow and visual odometry focus on tracking motion incrementally.

Visual Odometry (VO) estimates a drone's 3D trajectory by analyzing consecutive frames, while Optical Flow measures the apparent motion of brightness patterns between frames. Together, these methods enable drones to navigate without GPS, making them ideal for indoor, underground, or urban environments where satellite signals are weak or unavailable.
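For readers who want the underlying relation, optical flow methods typically start from the brightness constancy assumption. This is the standard textbook formulation, not something specific to the systems named in this article. The symbols are introduced only for this illustration: $I$ is image intensity, $(u, v)$ is the image-plane velocity of a pixel, and subscripts denote partial derivatives.

$$
I(x + u\,\delta t,\; y + v\,\delta t,\; t + \delta t) = I(x, y, t)
\quad\Longrightarrow\quad
I_x u + I_y v + I_t = 0
$$

The right-hand form follows from a first-order Taylor expansion. It gives one equation for two unknowns per pixel, so practical methods add a further constraint such as locally constant or smooth flow. It also assumes a point keeps the same brightness between frames, which is why changes in lighting degrade the estimate.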

In 2024, researchers developed a real-time monocular visual odometry model combining CNNs, LSTM networks, and attention modules. In testing, this model reduced translational drift by 22%, improved trajectory error by 12%, and sped up training convergence by 48%.
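Because visual odometry chains frame-to-frame motion estimates, small per-step errors compound into drift. The sketch below illustrates this with planar poses. It is a toy model under stated assumptions (2D motion and a constant, made-up heading bias of 2 degrees per step) and does not reproduce the model described above.

```python
import math
from typing import List, Tuple

Pose = Tuple[float, float, float]  # x (m), y (m), heading (rad)


def compose(pose: Pose, delta: Pose) -> Pose:
    """Apply a body-frame motion increment to a world-frame pose."""
    x, y, th = pose
    dx, dy, dth = delta
    return (
        x + dx * math.cos(th) - dy * math.sin(th),
        y + dx * math.sin(th) + dy * math.cos(th),
        th + dth,
    )


def integrate(deltas: List[Pose], start: Pose = (0.0, 0.0, 0.0)) -> List[Pose]:
    """Chain relative motions into a trajectory, as odometry does."""
    poses = [start]
    for d in deltas:
        poses.append(compose(poses[-1], d))
    return poses


# Fly a 1 m square: move forward 1 m, then turn left 90 degrees, four times.
true_step = (1.0, 0.0, math.pi / 2)
# Hypothetical estimator with a 2 degree heading bias on every step.
biased_step = (1.0, 0.0, math.pi / 2 + math.radians(2))

true_end = integrate([true_step] * 4)[-1]
drift_end = integrate([biased_step] * 4)[-1]
drift_m = math.hypot(drift_end[0] - true_end[0], drift_end[1] - true_end[1])
```

With these numbers the unbiased run returns to the origin, while the biased run ends roughly 0.1 m away after only four steps. Nothing in the chain can undo that error, which is the gap loop closure fills.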

Deep learning has further streamlined these pipelines, reducing computational loads and minimizing errors.

| Technology | Primary Function | Key Advantage | Common Challenge |
|---|---|---|---|
| Optical Flow | Motion estimation between frames | Low latency, simple motion detection | Sensitive to lighting changes |
| Visual Odometry | Trajectory estimation | Enables GPS-free navigation | Error accumulation (drift) |
| Visual SLAM | Mapping and Localization | Corrects drift via loop closure | High computational cost |

To enhance reliability, sensor fusion combines visual data with inputs from IMUs or LiDAR. This ensures accurate localization even in environments with poor lighting or sparse visual features.
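One simple way to picture sensor fusion is inverse-variance weighting of two independent measurements of the same quantity, which is also the core of a one-dimensional Kalman update. The sketch below is illustrative only: the sensor readings and variances are invented, and real systems fuse full state vectors over time rather than single scalars.

```python
from typing import Tuple


def fuse(
    est_a: float, var_a: float, est_b: float, var_b: float
) -> Tuple[float, float]:
    """Fuse two independent estimates by inverse-variance weighting."""
    w_a = 1.0 / var_a
    w_b = 1.0 / var_b
    fused = (w_a * est_a + w_b * est_b) / (w_a + w_b)
    fused_var = 1.0 / (w_a + w_b)
    return fused, fused_var


# Hypothetical altitude readings: vision is noisy in dim light, LiDAR is tighter.
vision_alt, vision_var = 10.4, 0.25  # metres, metres^2
lidar_alt, lidar_var = 10.0, 0.01

altitude, altitude_var = fuse(vision_alt, vision_var, lidar_alt, lidar_var)
```

The fused altitude comes out near 10.015 m, pulled strongly toward the more trustworthy LiDAR reading, and the fused variance is smaller than either input variance. That is the formal sense in which combining sensors improves on each one alone.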

Applications of Computer Vision in Drone Navigation

The technologies discussed earlier are now powering real-world drone applications across various industries. From inspecting infrastructure to delivering critical medical supplies, computer vision has become the backbone of autonomous drone operations in 2026.

Industrial Inspections and Mapping

With advanced computer vision algorithms, drones are performing detailed industrial inspections, even in areas where GPS is unavailable. These drones can detect structural issues like corrosion, cracks, and hotspots on energy infrastructure in real time, thanks to onboard Edge AI that eliminates the need for cloud connectivity.

In August 2024, researchers Rowan Border and Maurice Fallon showcased the Osprey system, which autonomously mapped three industrial sites covering 2,528 m² (about 27,200 sq ft) in just 112 minutes. Using LiDAR-based SLAM and "Next Best View" planning, the system created true-color maps and NeRF reconstructions for structural inspections. Similarly, ETH Zurich introduced a vision-based system in 2025 that allows drones to autonomously track power lines, leveraging real-time perception and trajectory planning to navigate utility assets safely.

"Drones are no longer just flying cameras, as they've become strategic assets for enterprises that gather, analyze, and act on visual data at scale."

- Yaroslav Mota, Head of Engineering Excellence, N-iX

Construction companies are now feeding drone-captured data directly into Building Information Modeling (BIM) systems, automatically identifying deviations from digital plans. For ongoing monitoring, tools like Anvil Labs integrate 3D models, LiDAR point clouds, thermal imagery, and orthomosaics, enabling teams to annotate findings and share insights seamlessly. These advancements are paving the way for drones to play a critical role in emergency response and logistics.

Search and Rescue Operations

Computer vision enables drones to navigate complex, GPS-unreliable environments by analyzing surroundings and avoiding obstacles in real time. During wide-area searches, computer vision processes thousands of images to identify targets quickly.

In low-visibility conditions like smoke or darkness, thermal imaging paired with computer vision detects heat signatures from survivors. This technology has fueled growth in the AI-powered drone market, projected to rise from $20.2 billion in 2025 to over $61.6 billion by 2032, with emergency response being a major driver.
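The heat-signature detection described above can be sketched, in its most basic form, as thresholding a thermal frame and grouping adjacent hot pixels into candidate blobs. This is a minimal illustration rather than a fielded method: the temperature grid, the 30 °C threshold, and the minimum blob size are all hypothetical, and deployed systems typically pass such candidates to a trained classifier.

```python
from typing import List, Tuple

Pixel = Tuple[int, int]  # (row, column)


def find_hotspots(
    frame: List[List[float]], min_temp_c: float, min_pixels: int = 2
) -> List[List[Pixel]]:
    """Group 4-connected pixels at or above min_temp_c into candidate blobs."""
    rows, cols = len(frame), len(frame[0])
    seen = [[False] * cols for _ in range(rows)]
    blobs: List[List[Pixel]] = []
    for r in range(rows):
        for c in range(cols):
            if seen[r][c] or frame[r][c] < min_temp_c:
                continue
            # Flood fill from this hot pixel to collect its whole blob.
            stack, blob = [(r, c)], []
            seen[r][c] = True
            while stack:
                y, x = stack.pop()
                blob.append((y, x))
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    if (
                        0 <= ny < rows
                        and 0 <= nx < cols
                        and not seen[ny][nx]
                        and frame[ny][nx] >= min_temp_c
                    ):
                        seen[ny][nx] = True
                        stack.append((ny, nx))
            if len(blob) >= min_pixels:  # drop isolated single-pixel noise
                blobs.append(blob)
    return blobs


# Hypothetical 4x5 thermal frame in degrees Celsius.
frame = [
    [12.0, 12.5, 12.1, 11.9, 12.0],
    [12.2, 34.8, 35.1, 12.0, 12.3],
    [12.1, 35.0, 34.6, 12.2, 12.1],
    [12.0, 12.1, 12.0, 12.4, 31.0],
]
hotspots = find_hotspots(frame, min_temp_c=30.0)
```

In this frame the 2x2 warm region is reported as one candidate, while the single warm pixel in the corner is discarded as noise.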

Delivery and Logistics

Delivery drones equipped with computer vision can detect obstacles in real time, enabling Beyond Visual Line of Sight (BVLOS) operations - essential for scaling long-distance services.

Between 2020 and 2021, Wing (Alphabet) drones delivered over 10,000 coffees in Logan, Australia, using AI to safely land in small, designated spaces. Amazon Prime Air's MK30 drones, equipped with advanced AI and "detect and avoid" technology, have completed thousands of deliveries for packages up to five pounds, all within an hour, under FAA-approved BVLOS operations.

Urban environments, where satellite signals can be obstructed by skyscrapers, present unique challenges. Here, drones rely on a combination of computer vision and inertial measurement units (IMUs) to navigate autonomously. Using LiDAR, they measure ground distances and detect obstacles, ensuring accurate and safe package delivery. In a groundbreaking example of medical logistics, MissionGO used an autonomous drone to deliver a donor kidney, showcasing the potential of computer vision-guided systems for urgent transplant operations.

In warehouses, drones equipped with computer vision streamline inventory management by navigating tight aisles and scanning barcodes or RFID tags. This has reduced inventory cycle times from weeks to mere hours. These developments highlight how computer vision is reshaping industries in profound ways.

Integrating Computer Vision with Advanced Platforms

Recent advancements in computer vision have paved the way for seamless integration with cutting-edge platforms, creating a robust ecosystem for industrial applications. By combining computer vision with sensors, AI processors, and data platforms, drones can now operate effectively in a variety of challenging environments.

Sensor Fusion for Improved Accuracy

Bringing together data from optical, thermal, and LiDAR sensors has significantly improved navigation precision. Each type of sensor contributes unique strengths: optical cameras capture detailed visuals, thermal imaging detects heat patterns even in poor visibility, and LiDAR provides accurate distance measurements for 3D mapping.

In 2023, researchers Nathaniel Simon and Anirudha Majumdar showcased the power of sensor fusion with MonoNav, a system designed for a 37-gram Crazyflie micro-aerial vehicle. Using just a monocular camera and optical odometry, the drone navigated cluttered indoor spaces at 0.5 m/s. By combining monocular depth estimation with path-planning algorithms, MonoNav cut the collision rate by a factor of four compared to earlier methods. The result shows that even small drones can achieve precise 3D mapping by integrating visual and inertial data.
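To show how a depth estimate from a single camera becomes 3D geometry that a planner can use, the sketch below back-projects a depth map through a pinhole camera model. This is a generic illustration and not MonoNav's implementation; the tiny depth map and the intrinsics `fx`, `fy`, `cx`, `cy` are invented values.

```python
from typing import List, Tuple

Point3 = Tuple[float, float, float]  # camera-frame (X, Y, Z) in metres


def backproject(
    depth: List[List[float]], fx: float, fy: float, cx: float, cy: float
) -> List[Point3]:
    """Convert a depth map (metres) into 3D points with a pinhole model."""
    points: List[Point3] = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0.0:  # treat non-positive depth as "no estimate"
                continue
            points.append(((u - cx) * z / fx, (v - cy) * z / fy, z))
    return points


# Hypothetical 2x3 depth map; 0.0 marks a pixel with no valid depth.
depth = [
    [2.0, 2.0, 0.0],
    [2.0, 1.0, 2.0],
]
cloud = backproject(depth, fx=100.0, fy=100.0, cx=1.0, cy=0.5)
nearest = min(p[2] for p in cloud)
```

The five valid pixels become five 3D points, and the nearest obstacle sits 1.0 m ahead. A planner would accumulate such points over many frames, using the drone's pose estimate to place them in a common map.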

For industrial use, advanced instance segmentation models allow drones to identify individual objects within a 3D scene. This means drones can distinguish specific components like power lines, bolts, or equipment parts, enabling detailed spatial analysis and better obstacle avoidance.

Using 3D Models and Spatial Analysis

Once drones collect visual data, that information must flow into platforms capable of processing, storing, and sharing it. Computer vision automates this pipeline, turning captured images into high-fidelity 3D digital twins. These digital replicas are instrumental for tasks like structural health monitoring and tracking construction progress in real time against Building Information Modeling (BIM) systems.
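As a rough picture of what checking captured data against a BIM model involves, the sketch below compares surveyed element positions with planned ones and flags anything outside tolerance. It assumes element positions have already been extracted from the 3D reconstruction, which is the hard part in practice. The element IDs, coordinates, and the 5 cm tolerance are hypothetical, and no real BIM API is used.

```python
import math
from typing import Dict, List, Tuple

Point3 = Tuple[float, float, float]  # (x, y, z) in metres


def find_deviations(
    planned: Dict[str, Point3], surveyed: Dict[str, Point3], tolerance_m: float
) -> List[Tuple[str, float]]:
    """Return (element_id, offset_m) for elements outside tolerance.

    Elements present in the plan but absent from the survey are reported
    with an infinite offset.
    """
    flagged: List[Tuple[str, float]] = []
    for element_id, plan_pos in planned.items():
        if element_id not in surveyed:
            flagged.append((element_id, math.inf))
            continue
        offset = math.dist(plan_pos, surveyed[element_id])
        if offset > tolerance_m:
            flagged.append((element_id, offset))
    return flagged


# Hypothetical plan and drone survey.
planned = {
    "column-A1": (0.0, 0.0, 3.0),
    "column-A2": (6.0, 0.0, 3.0),
    "beam-B1": (3.0, 0.0, 6.0),
}
surveyed = {
    "column-A1": (0.01, 0.0, 3.0),
    "column-A2": (6.08, 0.0, 3.0),
}
flagged = find_deviations(planned, surveyed, tolerance_m=0.05)
```

Here `column-A2` is flagged for an 8 cm offset and `beam-B1` is flagged as not yet observed, while `column-A1` passes.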

Platforms such as Anvil Labs streamline this process by hosting diverse data types - 3D models, LiDAR point clouds, thermal imagery, and orthomosaics - in a unified environment. Teams can annotate findings directly on 3D models, perform measurements, and securely share insights across devices. This integration bridges the gap between data collection and actionable decisions, enabling construction managers to quickly identify discrepancies between drone-captured data and digital plans.

The adoption of digital twins is reshaping maintenance practices. Instead of reacting to failures, companies now rely on drone-captured 3D models for predictive maintenance, identifying potential issues before they escalate. This shift requires a seamless connection between computer vision algorithms that detect anomalies and platforms that track changes, correlate findings with asset databases, and automatically trigger work orders. With these integrations in place, fast onboard processing becomes critical for real-world deployment.

Real-Time Processing and Edge AI

How quickly a drone processes visual data directly affects how safely it can navigate. Edge AI architectures, which handle processing onboard the drone instead of relying on the cloud, enable the split-second reactions needed to avoid obstacles.

"The real impact of an AI drone depends on how quickly it processes data. With edge computing, drone computer vision can analyze visual data onboard without relying on cloud connectivity."

- Yaroslav Mota, Head of Engineering Excellence, N-iX

NVIDIA Jetson has become a leader in onboard processing for drones, running lightweight models optimized for on-device analysis. This reduces latency and energy use, ensuring reliable performance even in remote locations like underground mines, dense forests, or isolated infrastructure sites.
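A short calculation shows why latency matters for obstacle avoidance. The sketch below adds the distance flown during the perception delay to the braking distance under constant deceleration. All figures are assumptions chosen for illustration (10 m/s cruise speed, 5 m/s² braking, 30 ms onboard latency versus a 300 ms cloud round trip), not measurements of any particular platform.

```python
def stopping_distance_m(
    speed_mps: float, latency_s: float, decel_mps2: float
) -> float:
    """Distance covered before stopping: reaction distance plus braking distance."""
    reaction = speed_mps * latency_s              # flown before the drone reacts
    braking = speed_mps ** 2 / (2 * decel_mps2)   # constant-deceleration stop
    return reaction + braking


SPEED_MPS = 10.0   # hypothetical cruise speed
DECEL_MPS2 = 5.0   # hypothetical maximum braking deceleration

onboard_m = stopping_distance_m(SPEED_MPS, latency_s=0.03, decel_mps2=DECEL_MPS2)
cloud_m = stopping_distance_m(SPEED_MPS, latency_s=0.30, decel_mps2=DECEL_MPS2)
extra_m = cloud_m - onboard_m
```

Under these assumptions the onboard pipeline needs about 10.3 m to stop and the cloud pipeline about 13.0 m, so the extra latency alone costs roughly 2.7 m of clearance. The gap grows linearly with speed.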

In July 2025, researchers Qianzhong Chen and Mac Schwager introduced GRaD-Nav, a framework combining 3D Gaussian Splatting with deep reinforcement learning. This system achieved zero-shot sim-to-real transfer, allowing drones to navigate through gates in various environments with no additional training. Advances like this help drones adapt to new settings without retraining, making them more practical for a wide range of industrial operations.

Conclusion

Key Takeaways

Computer vision has transformed drones into highly capable tools for making autonomous decisions, even in tough and unpredictable environments. Technologies like SLAM, CNNs, and sensor fusion now allow drones to navigate and operate safely, even in areas without GPS access.

The YOLO framework has become a standout in object detection, featuring in over 39.5% of research studies thanks to its speed and efficiency. At the same time, Edge AI architectures have made it possible for drones to process visual data directly onboard, delivering reaction times in milliseconds without needing constant cloud connectivity.

For successful deployment, integrating the right hardware with strong MLOps practices is essential. This ensures that models stay accurate across varying conditions and climates, addressing challenges like model drift. With the global market for drone vision-based navigation projected to grow at a yearly rate of over 20% through 2030, the potential for these technologies is immense.

These advancements are reshaping how industries operate, offering more efficient and safer solutions.

Impact on Industrial Sectors

The adoption of computer vision in drones is driving a shift from reactive maintenance to predictive maintenance, improving safety and operational efficiency. For example, these drones can detect issues like corrosion, cracks, or thermal irregularities before they lead to critical failures, minimizing human exposure to dangerous environments such as high-voltage areas or confined spaces. In warehouses, drones equipped with computer vision have drastically reduced inventory cycle counts - from weeks to just hours - for major companies like IKEA, Walmart, and Amazon.

As Yaroslav Mota, Head of Engineering Excellence at N-iX, has noted, drones now serve as strategic assets for gathering, analyzing, and acting on visual data.

Platforms like Anvil Labs make it easier to turn collected data into actionable insights. They host 3D models, LiDAR point clouds, and thermal imagery in unified environments, where teams can annotate, measure, and even automate work orders. As regulations for Beyond Visual Line of Sight (BVLOS) operations continue to expand, drones equipped with computer vision will increasingly handle tasks like automated "detect-and-avoid" systems. This opens the door to round-the-clock autonomous monitoring across a wide range of industries.

FAQs

What hardware do I need for onboard (edge) computer vision on a drone?

For onboard computer vision, you'll need a companion computer or an edge AI device that can process visual data in real time. Popular choices include Linux-based systems like the UP Core, NVIDIA Jetson Orin Nano, or specialized cameras like the OAK series. These devices manage tasks such as obstacle avoidance, localization, and object detection. The right hardware will depend on your drone's specific computational requirements, power constraints, and the objectives of your mission.

When should I use SLAM vs visual odometry for GPS-denied navigation?

When your drone needs to map and localize in real time - especially in environments where GPS isn’t an option or where surroundings are complex and constantly changing - SLAM is the go-to choice. It’s perfect for generating precise maps while enabling the drone to navigate with a clear understanding of its surroundings.

On the other hand, if you’re looking for a simpler, more affordable solution for motion estimation, visual odometry is a solid option. It performs well in well-lit, textured areas, such as indoors or spaces with plenty of visual features. However, keep in mind that it does not build a map or correct accumulated drift, so it’s better suited to shorter flights in environments with minimal complexity.

How do I keep drone vision models accurate over time (MLOps and model drift)?

To keep drone vision models performing well over time, it's crucial to focus on consistent monitoring and upkeep. Regularly check performance metrics to catch any unnoticed drops in accuracy or shifts in data patterns. Set up a feedback loop to keep an eye on real-world data, spot changes, and prompt model updates as needed. Periodically recalibrating sensors is also important to minimize errors and maintain precise data collection. Following a well-organized MLOps framework ensures the model stays dependable and effective in the long run.
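One way to picture the monitoring loop described above is a rolling-accuracy check that raises a flag when recent performance falls too far below the accuracy measured at deployment. The sketch below is a minimal illustration: the class name, the baseline, the allowed drop, and the window size are all hypothetical, and it assumes some labelled feedback (for example, spot checks by an operator) is available to score predictions.

```python
from collections import deque
from typing import Deque, Optional


class DriftMonitor:
    """Flag a model for review when rolling accuracy drops below a floor."""

    def __init__(self, baseline_accuracy: float, max_drop: float, window: int) -> None:
        self.baseline = baseline_accuracy
        self.max_drop = max_drop
        self.window = window
        self.results: Deque[bool] = deque(maxlen=window)

    def record(self, prediction_correct: bool) -> None:
        """Store the outcome of one labelled prediction."""
        self.results.append(prediction_correct)

    def rolling_accuracy(self) -> Optional[float]:
        """Accuracy over the last `window` results, or None until the window fills."""
        if len(self.results) < self.window:
            return None
        return sum(self.results) / len(self.results)

    def needs_retraining(self) -> bool:
        acc = self.rolling_accuracy()
        return acc is not None and acc < self.baseline - self.max_drop


# Hypothetical scenario: accuracy holds, then conditions change.
monitor = DriftMonitor(baseline_accuracy=0.95, max_drop=0.05, window=10)
for _ in range(10):
    monitor.record(True)
flag_before = monitor.needs_retraining()

for _ in range(3):
    monitor.record(False)
flag_after = monitor.needs_retraining()
```

The flag stays off while the window is all correct and turns on once three of the last ten predictions are wrong (0.7 against a floor of 0.90). In practice the same pattern is often applied to input statistics as well, since labels for field data tend to arrive late.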