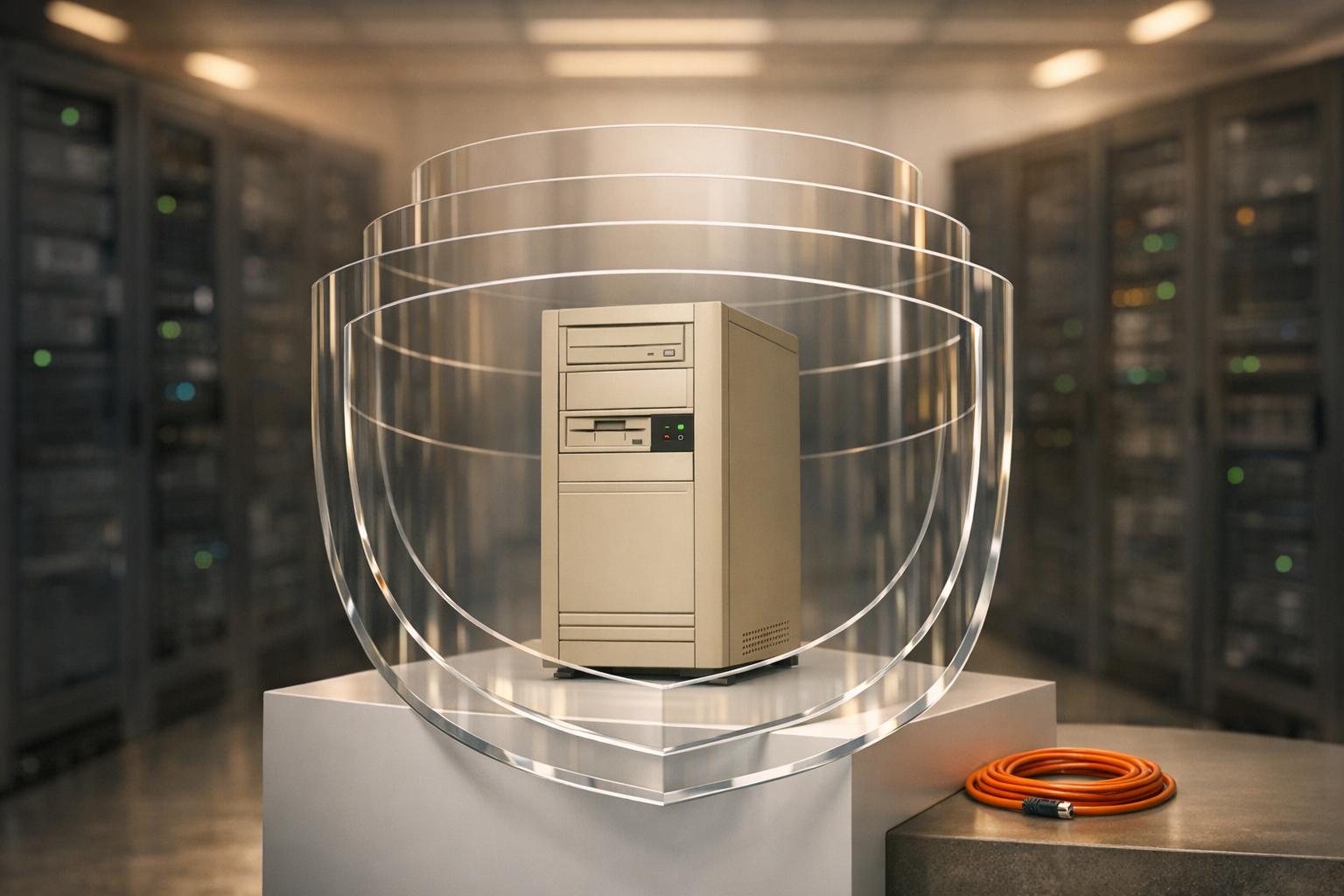

Legacy systems are often the weakest link during disaster recovery. Built on outdated technology, they lack modern security features like encryption and multi-factor authentication. This makes them prime targets for attackers, especially during recovery when vulnerabilities are exposed. Key risks include:

- Data Breaches: Over 60% of breaches involve legacy systems with poor access controls.

- Vendor Support Gaps: Many legacy platforms no longer receive updates, leaving security holes unpatched.

- Hidden Dependencies: Undocumented connections between systems can cause cascading failures during recovery.

To safeguard your legacy systems, focus on these steps:

- Audit for Vulnerabilities: Create an inventory of all legacy components and use tools like Nessus for scanning.

- Secure Backups: Encrypt data, use automated backups, and test recovery processes quarterly.

- Implement Access Controls: Limit admin privileges, enforce MFA, and use role-based access control (RBAC).

- Monitor for Threats: Deploy intrusion detection systems (IDS) and centralize logs in a secure location.

- Isolate Risky Systems: Use network segmentation to shield legacy systems from broader threats.

Legacy systems are challenging to secure, but with proper encryption, monitoring, and recovery planning, you can minimize risks and protect critical data during disasters.

5-Step Framework for Securing Legacy Systems During Disaster Recovery

Finding Security Weaknesses in Legacy Systems

Before diving into recovery strategies, it’s critical to evaluate legacy systems for vulnerabilities. Without addressing these weak points, you risk leaving your systems open to exploitation during a crisis.

Running a Security Audit

Start by creating an inventory of every legacy component, noting details like version, configuration, and network location. Use this data to generate a Software Bill of Materials (SBOM), which can help identify any unsupported third-party dependencies. For vulnerability scanning, rely on trusted tools like Nessus or Qualys to automate the process. However, since legacy systems often include custom code that automated tools might miss, supplement these scans with SAST or manual code reviews to catch hidden issues.
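As a rough illustration of the inventory step, the sketch below records each component's version, network location, and vendor end-of-support date, then flags anything unsupported. The `LegacyComponent` fields and the sample entries are hypothetical; a real inventory would come from your CMDB or scanner exports.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class LegacyComponent:
    name: str
    version: str
    network_location: str            # hostname, IP, or subnet
    end_of_support: Optional[date]   # None means the vendor date is unknown


def unsupported(components, today):
    """Return components whose vendor support has ended or cannot be confirmed."""
    return [
        c for c in components
        if c.end_of_support is None or c.end_of_support < today
    ]


# Hypothetical inventory entries, for illustration only.
inventory = [
    LegacyComponent("billing-db", "9.2", "10.0.5.12", date(2019, 6, 30)),
    LegacyComponent("hr-portal", "4.1", "10.0.7.3", date(2030, 1, 1)),
    LegacyComponent("plant-scada", "2.0", "10.0.9.20", None),
]

for c in unsupported(inventory, today=date(2025, 1, 1)):
    print(f"{c.name} {c.version} at {c.network_location}: unsupported or unknown")
```

Treating an unknown end-of-support date as a finding is a deliberate choice here: a component nobody can vouch for deserves the same scrutiny as one that is known to be out of support.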

To better understand risks, apply formal risk frameworks like the NIST Risk Management Framework. This approach can help you identify specific threats, such as open ports, outdated APIs, or services that should’ve been disabled long ago.

"Legacy applications often introduce significant security risks... the application no longer receives patching or vendor support. This drastically increases the risk of vulnerability in the application being left in an exploitable state." - OWASP

Go beyond software dependencies by mapping out critical staff roles. Losing key personnel could derail recovery efforts if their expertise is essential and overlooked.

Checking Backup and Recovery Capabilities

Test your backups against your Recovery Time Objective (RTO) and Recovery Point Objective (RPO) to ensure they meet your organization’s needs. Automate backups - daily incremental and weekly full backups are a good standard - to minimize the risk of human error. Ensure data is encrypted both at rest, using a strong cipher such as AES-256, and in transit, using TLS, and apply least-privilege access principles to limit exposure. For example, Azure Backup’s soft delete feature offers a safeguard by retaining deleted backup data for an additional 14 days so it can still be recovered.

"When attackers compromise machines, they often make significant changes to configurations and software. Sometimes attackers also make subtle alterations of data stored on compromised machines, potentially jeopardizing organizational effectiveness with polluted information." - CIS (Center for Internet Security)

Regularly test your ability to restore data - ideally, once a quarter. Use a random sampling of assets to confirm that restored data is functional, uncorrupted, and authentic. Don’t just focus on data; include system configurations, software, and any dependencies needed to rebuild the entire legacy environment.

Reviewing Vendor Support and System Compatibility

Legacy systems often struggle to integrate with modern security tools. They might produce data in proprietary formats that modern Security Information and Event Management (SIEM) systems can’t process. Many still rely on outdated encryption methods or even plain text protocols, exposing them to risks during data transmission. Limited logging capabilities can further complicate integration into centralized monitoring frameworks. Some systems even require obsolete browsers like Internet Explorer 11, which no longer receive security updates.

"The longer legacy IT remains in organisations' environments, the harder it will become to find staff with the technical skills to operate and support it." - Australian Signals Directorate

Document these compatibility challenges thoroughly. For systems that need modern features like MFA or updated identity solutions, you may need external integrations - such as jump servers or network boundaries. Custom APIs or automation scripts can also help convert legacy logs into formats compatible with modern tools. If your systems require outdated operating environments, consider using virtual environments to enhance logging and detection capabilities. Finally, isolate these systems within restricted subnets using routers and firewalls to limit their potential as attack vectors.
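A log-conversion script of the kind mentioned above might look like the following minimal sketch. The pipe-delimited input format, the field names, and the assumption that the legacy system writes timestamps in UTC are all hypothetical; adapt them to whatever your system actually emits.

```python
import json
from datetime import datetime, timezone


def convert_legacy_line(line: str, source: str) -> str:
    """Convert one pipe-delimited legacy log line into a JSON event string."""
    raw_time, category, outcome, detail = line.strip().split("|", 3)
    # Assumption: timestamps are written in UTC as DD/MM/YYYY HH:MM:SS.
    ts = datetime.strptime(raw_time, "%d/%m/%Y %H:%M:%S").replace(tzinfo=timezone.utc)
    event = {
        "timestamp": ts.isoformat().replace("+00:00", "Z"),
        "source": source,
        "category": category,
        "outcome": outcome,
        "detail": detail,
    }
    return json.dumps(event, sort_keys=True)


print(convert_legacy_line("25/07/2024 20:54:59|AUTH|FAIL|user=jsmith", "legacy-erp"))
```

Emitting one JSON object per line keeps the output easy for most log shippers to forward, though the exact schema your SIEM expects will differ.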

Once vulnerabilities are mapped out, the next step is to implement stronger encryption and access controls, ensuring these systems are better protected moving forward.


Setting Up Encryption and Access Controls

After identifying vulnerabilities, the next step is to protect your data with modern encryption techniques and access control measures. This is especially important for legacy systems, which often require additional safeguards to address the weaknesses uncovered during assessments.

Encrypting Data at Rest and in Transit

Encryption is your first line of defense, ensuring that even if storage is compromised, the data remains unreadable to unauthorized users. For older systems like Microsoft SQL Server, Transparent Data Encryption (TDE) can encrypt data at the file level without requiring application code changes. When transferring backups to the cloud, secure them with a user-defined passphrase for added protection.

A smarter approach to encryption is using envelope encryption. Here’s how it works: encrypt your database with a Data Encryption Key (DEK), then protect that DEK with a Key Encryption Key (KEK) stored in a secure vault like HashiCorp Vault. This vault automatically encrypts data using a 256-bit AES cipher in Galois Counter Mode. Similarly, Azure Backup ensures all backup data at rest is encrypted with 256-bit AES encryption.
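The DEK/KEK relationship can be sketched in a few lines. This example assumes the third-party `cryptography` package is installed and generates the KEK locally purely for demonstration; in a real deployment the KEK would stay inside a vault or KMS and the wrap and unwrap steps would be calls to that service.

```python
import os
from cryptography.hazmat.primitives.ciphers.aead import AESGCM


def envelope_encrypt(plaintext: bytes, kek: bytes) -> dict:
    """Encrypt data with a fresh DEK, then wrap (encrypt) the DEK with the KEK."""
    dek = AESGCM.generate_key(bit_length=256)
    data_nonce = os.urandom(12)
    ciphertext = AESGCM(dek).encrypt(data_nonce, plaintext, None)
    key_nonce = os.urandom(12)
    wrapped_dek = AESGCM(kek).encrypt(key_nonce, dek, None)
    # Only the wrapped DEK is stored alongside the data, never the plain DEK.
    return {
        "ciphertext": ciphertext,
        "data_nonce": data_nonce,
        "wrapped_dek": wrapped_dek,
        "key_nonce": key_nonce,
    }


def envelope_decrypt(blob: dict, kek: bytes) -> bytes:
    """Unwrap the DEK with the KEK, then decrypt the data with the DEK."""
    dek = AESGCM(kek).decrypt(blob["key_nonce"], blob["wrapped_dek"], None)
    return AESGCM(dek).decrypt(blob["data_nonce"], blob["ciphertext"], None)


# Demonstration only: a locally generated KEK stands in for a vault-held key.
kek = AESGCM.generate_key(bit_length=256)
blob = envelope_encrypt(b"legacy database export", kek)
print(envelope_decrypt(blob, kek))
```

One practical benefit of this layout is that rotating the KEK only requires re-wrapping the small DEK, not re-encrypting the whole database or backup.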

"Protecting data at rest, like protecting data in transit, requires a defense in-depth approach to ensure your sensitive data is not accessible unless a person or system is authorized to access the data." - HashiCorp

When moving data, always use Transport Layer Security (TLS), for example via HTTPS. In hybrid setups, where legacy systems connect to cloud storage, apply client-side encryption so data is protected before leaving your environment. To maintain consistency, automate encryption settings across all storage targets using Infrastructure as Code (IaC) templates. Also, enable deletion safeguards in your key management service, such as soft-delete and purge protection in Azure Key Vault. AWS KMS takes a similar approach: it enforces a waiting period of 7 to 30 days before permanently deleting a key, giving you time to cancel an unauthorized deletion.
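For scripts that move backups or logs over the network, a client-side TLS configuration along these lines is one way to refuse outdated protocol versions. The choice of TLS 1.2 as the floor is an assumption; raise it to TLS 1.3 if every endpoint supports it.

```python
import ssl


def strict_tls_context() -> ssl.SSLContext:
    """Build a client TLS context that verifies certificates and rejects old protocols."""
    ctx = ssl.create_default_context()            # certificate and hostname checks on
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # refuse TLS 1.0 and 1.1
    return ctx


ctx = strict_tls_context()
print(ctx.minimum_version.name, ctx.verify_mode.name, ctx.check_hostname)
```

The resulting context can be passed to standard-library clients, for example `urllib.request.urlopen(url, context=ctx)`.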

| Encryption Type | Legacy Application Method | Cloud Recovery Method |
|---|---|---|
| At Rest | PGP for files, TDE for SQL Server | Server-Side Encryption (SSE), AES-256 |
| In Transit | TLS/HTTPS, client-side encryption before upload | TLS/HTTPS over private endpoints |
| Key Management | Passphrases or local HSMs | Cloud KMS, Vaults, KEK/DEK wrapping |

To strengthen these encryption measures, pair them with robust authentication protocols.

Using Multi-Factor Authentication (MFA)

Multi-Factor Authentication (MFA) is a powerful tool: Microsoft has reported that it can block more than 99.9% of account compromise attacks. For legacy systems that lack built-in support for modern authentication, you can implement an identity abstraction layer. This layer bridges the gap between the application and authentication policies, allowing you to add MFA without altering the original code.

"Identity Orchestration uses an abstraction layer called an identity fabric that sits between the application and the policies that govern authentication... without touching the application itself." - Aldo Pietropaolo, Strata.io

Connect older tools to modern MFA providers using protocols like RADIUS, SAML, or OpenID Connect (which is built on OAuth 2.0). Opt for phishing-resistant options such as FIDO2 security keys and biometrics, which are far more secure than SMS or voice-based methods prone to interception or SIM swapping. MFA should be mandatory for high-stakes actions like deleting backups, altering retention policies, or accessing encryption keys.

For emergencies, establish "break glass" accounts for admin tasks if your primary MFA system fails during a disaster. Store master passwords and recovery keys in secure physical safes at two separate locations, requiring multiple people to access them. Roll out MFA gradually, starting with high-value accounts like those of administrators and senior management, before expanding to all users.

MFA is just one piece of the puzzle. Another critical step is limiting administrative access.

Restricting Administrative Privileges

Excessive administrative access poses a huge risk, especially during recovery. Shift from broad admin rights to Role-Based Access Control (RBAC), granting only the permissions necessary for specific recovery tasks. Separate critical operations - like deleting backups or altering retention policies - from routine administrative duties to minimize single points of failure.
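To make the separation of duties concrete, here is a minimal RBAC sketch in which destructive backup operations sit in their own roles rather than under a general administrator. The role and permission names are hypothetical and would map to whatever your backup platform or identity provider exposes.

```python
# Hypothetical roles and permissions; adjust to your own recovery runbook.
ROLE_PERMISSIONS = {
    "backup_operator": {"run_backup", "restore_backup"},
    "retention_admin": {"change_retention"},
    "backup_deleter": {"delete_backup"},
    "sysadmin": {"restart_service", "patch_host"},
}


def is_allowed(user_roles, action):
    """Allow an action only if one of the user's roles explicitly grants it."""
    return any(action in ROLE_PERMISSIONS.get(role, set()) for role in user_roles)


print(is_allowed({"backup_operator"}, "restore_backup"))  # True
print(is_allowed({"sysadmin"}, "delete_backup"))          # False
```

Because unknown roles grant nothing, the check fails closed, which is the behavior you want when a legacy account shows up with a role nobody documented.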

"The security textbook solution – immediate enforcement of least privilege – would have disrupted patient care during critical situations." - Ayo Akinsanya, CISSP, CC

To transition smoothly, adopt a hybrid model where new identity management systems coexist with legacy credentials, gradually reducing privileges for the latter. Before making changes, analyze network traffic and consult experienced staff to identify hidden dependencies on administrative access. For legacy systems that lack granular permission settings, use compensating controls like network segmentation, enhanced logging, and virtual patching. Move shared or hardcoded admin credentials into secure secrets managers or vaults. For recovery tasks, use dynamic credentials that expire once the task is complete.

To further secure your backups, store them in an isolated account - a "data bunker" - with strict access requirements tailored to recovery scenarios. Regularly audit user permissions to identify and remove unnecessary access rights that accumulate over time (privilege creep).

With these encryption and access control measures in place, you’re better equipped to protect your data and systems. The next step is to focus on building a resilient backup and recovery strategy that can handle both operational failures and security threats.

Building Better Backup and Recovery Strategies

After implementing robust encryption and access controls, the next step is to focus on creating dependable backup systems. These backups should remain secure and functional even during disasters. Older systems are particularly at risk, as their backup tools often lack modern protections. To ensure smooth operations, set up automated backups and routinely test recovery processes.

Using Immutable Backups

Immutable backups are a key defense against ransomware since they cannot be altered once created. Using Write Once, Read Many (WORM) technology, these backups remain locked until their retention period ends - even administrators can’t modify or delete them. This is vital, as 89% of organizations have faced attacks targeting their backup systems.

Follow the 3-2-1-1-0 Rule:

- Keep three copies of your data.

- Use two different storage media.

- Store one copy off-site and one offline or immutable.

- Ensure zero errors with regular recovery tests.
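The rule lends itself to an automated check. The sketch below is one possible interpretation, assuming the copy count includes the live production copy and that a backup that has never been restore-tested does not satisfy the "zero errors" condition; the `DataCopy` fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DataCopy:
    media: str                             # e.g. "disk", "tape", "object-storage"
    offsite: bool = False
    offline_or_immutable: bool = False
    is_primary: bool = False               # the live production copy
    restore_errors: Optional[int] = None   # errors in last restore test; None = untested


def check_3_2_1_1_0(copies):
    """Return a pass/fail result for each part of the 3-2-1-1-0 rule."""
    backups = [c for c in copies if not c.is_primary]
    return {
        "3 copies": len(copies) >= 3,
        "2 media types": len({c.media for c in copies}) >= 2,
        "1 off-site": any(c.offsite for c in backups),
        "1 offline or immutable": any(c.offline_or_immutable for c in backups),
        "0 restore errors": bool(backups) and all(c.restore_errors == 0 for c in backups),
    }


copies = [
    DataCopy("disk", is_primary=True),
    DataCopy("disk", restore_errors=0),
    DataCopy("object-storage", offsite=True, offline_or_immutable=True),  # untested
]
for rule, ok in check_3_2_1_1_0(copies).items():
    print(f"{rule}: {'pass' if ok else 'FAIL'}")
```

In this sample the off-site copy has never been restored, so the final condition fails even though the storage layout itself is compliant.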

For older systems, configure retention locking to prevent administrators from shortening retention periods. For instance, Veeam offers a minimum immutability period of 7 days, with options extending up to 9,999 days for long-term compliance. Cloud platforms such as Amazon S3 with Object Lock, Azure Blob Storage with immutable storage policies, and Google Cloud Backup Vaults provide immutability without requiring physical WORM hardware. Azure Backup also includes a "soft delete" feature that retains deleted backups for 14 days, safeguarding against accidental or malicious deletions.

To further secure backups, use separate administrator credentials for backup environments. This limits unauthorized access. When legal cases involve legacy systems, "Compliance Holds" can suspend expiration rules for specific backups indefinitely.

Once immutability is in place, focus on automating backup schedules to protect legacy systems effectively.

Scheduling and Automating Regular Backups

Automating backups ensures consistency and reliability. Schedule these processes during off-peak hours to minimize performance disruptions. For older databases, use application-consistent backups - these briefly quiesce the application and flush pending writes so that restored data is usable.

Incremental backups, which capture only changes since the last backup, are especially helpful for reducing the strain on older hardware. Tailor your backup frequency to your Recovery Point Objective (RPO). For example:

- Critical systems like ERP or billing platforms might need backups every 15 minutes.

- File shares may only require daily backups.

Here’s a quick reference for backup strategies:

| System Type | RPO (Data Loss Limit) | RTO (Downtime Limit) | Recommended Strategy |
|---|---|---|---|
| Core ERP / Billing | 15 minutes | 2 hours | Frequent snapshots with automated replication |
| Email / Collaboration | 4 hours | 8 hours | Multiple automated backups each day |
| File Shares | 24 hours | 24 hours | Nightly automated incremental backups |
| HR / Internal Systems | 24 hours | 48 hours | Weekly full backups with daily incrementals |
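A scheduled check like the following sketch can confirm that the newest backup for each system is still inside its RPO. The RPO values come from the table above; the system keys and the sample backup times are hypothetical, and in practice the timestamps would come from your backup tool's job history.

```python
from datetime import datetime, timedelta, timezone

# RPO targets from the reference table; the dictionary keys are illustrative.
RPO_TARGETS = {
    "core-erp": timedelta(minutes=15),
    "email": timedelta(hours=4),
    "file-shares": timedelta(hours=24),
    "hr-internal": timedelta(hours=24),
}


def rpo_violations(last_backup, now):
    """Return {system: backup age} for systems outside their RPO (age None = no backup)."""
    late = {}
    for system, rpo in RPO_TARGETS.items():
        finished = last_backup.get(system)
        if finished is None:
            late[system] = None
        elif now - finished > rpo:
            late[system] = now - finished
    return late


now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
last_backup = {
    "core-erp": now - timedelta(minutes=40),
    "email": now - timedelta(hours=1),
    "file-shares": now - timedelta(hours=30),
    # "hr-internal" has no recorded backup at all
}
for system, age in rpo_violations(last_backup, now).items():
    print(system, "no backup found" if age is None else f"last backup {age} ago")
```

Feeding the result into your alerting channel catches silent schedule failures, which are common on legacy hosts where backup agents are no longer maintained.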

For on-premises systems, consider using a Storage Gateway to connect local data with cloud-based backups. If modern backup tools aren’t available for legacy systems, rely on CLI, SDKs, or third-party solutions to maintain a consistent schedule. Set up alerts to catch failures immediately. For large initial backups, shipping physical storage devices to data centers can help avoid network congestion.

Testing Recovery Procedures

Testing recovery processes regularly ensures that backups work when needed. For critical systems, conduct formal restore tests quarterly; for less critical systems, aim for annual tests. Don’t just trust the "green checkmark" in backup software - verify that restored data is usable and applications function as expected.

"Backups that have never been restored are a theory, not a control."

- Andrii Demchenko, Imagis

Restore backups in isolated environments to avoid disrupting production systems. Before starting, establish clear validation criteria - check data types, formats, checksums, and file sizes for accuracy. Functional tests are also essential to confirm that users can log in, databases return correct results, and applications run smoothly.
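The size and checksum criteria can be checked automatically against a manifest captured at backup time. This is a minimal sketch: the manifest layout (`relative path -> (size, SHA-256)`) is an assumption, and a temporary directory stands in for the isolated restore target.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Hash a file in chunks so large restores do not exhaust memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(1024 * 1024), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_restore(restore_dir: Path, manifest: dict) -> list:
    """Compare restored files with a manifest of {relative_path: (size, sha256)}."""
    problems = []
    for rel_path, (size, checksum) in manifest.items():
        target = restore_dir / rel_path
        if not target.is_file():
            problems.append(f"{rel_path}: missing")
        elif target.stat().st_size != size:
            problems.append(f"{rel_path}: size mismatch")
        elif sha256_of(target) != checksum:
            problems.append(f"{rel_path}: checksum mismatch")
    return problems


# Demonstration with throwaway data; file names and contents are made up.
data = b"id,amount\n1,100\n"
with tempfile.TemporaryDirectory() as tmp:
    restored = Path(tmp)
    (restored / "ledger.csv").write_bytes(data)
    manifest = {
        "ledger.csv": (len(data), hashlib.sha256(data).hexdigest()),
        "config.ini": (10, "0" * 64),   # expected but never restored
    }
    problems = verify_restore(restored, manifest)

print(problems)
```

A clean result here only proves the bytes match; the functional tests described above are still needed to show the application actually works on the restored data.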

Measure the time it takes to restore and fully operationalize applications to ensure compliance with your Recovery Time Objective (RTO). Document every detail of the test, including job IDs, results, screenshots, and any issues. Address any gaps with a remediation plan. Tools like AWS Lambda can help automate restore workflows, reducing the chance of human error.

Finally, simulate various failure scenarios - such as losing a single file, a virtual machine, or an entire server - to prepare for different types of disasters. Regular testing strengthens your disaster recovery readiness.

Monitoring and Detecting Intrusions During Recovery

Recovering from a disaster often opens up a window of vulnerability. Malware can linger undetected on networks for anywhere from 70 to 200 days before causing visible damage. For organizations relying on older systems, identifying a cybersecurity breach could take as long as 18 months. These risks highlight the importance of constant monitoring to protect recovery efforts, especially when legacy systems are involved.

Deploying Intrusion Detection Systems (IDS)

Older systems without built-in logging capabilities require external tools to monitor network traffic and capture error logs.

To safeguard evidence, forward all IDS and IPS logs to a remote, centralized Security Information and Event Management (SIEM) system immediately. This prevents attackers from tampering with locally stored logs on compromised machines. Make sure legacy systems, especially those connected to the internet or holding critical data, are prioritized in your logging strategy.

An example of how monitoring can catch threats: since mid-2021, Volt Typhoon, a known threat actor, has targeted critical infrastructure using "Living Off the Land" (LOTL) techniques. They’ve used PowerShell commands like Get-EventLog security -instanceid 4624 to identify user accounts and vssadmin to create volume shadow copies for extracting Active Directory databases (NTDS.dit). While these actions use legitimate tools, they leave specific traces in logs. A well-configured SIEM can detect these anomalies by comparing them to normal activity patterns.

| Logging Priority | Asset Type | Rationale |
|---|---|---|
| High | Internet-facing services | Most likely targets for initial exploitation |
| High | Identity/Domain Management | Crucial for detecting lateral movements |
| Medium | Legacy IT Assets | High vulnerability due to outdated security |
| Medium | Administrative Workstations | Key targets for credential theft |
| Low | Underlying Infrastructure | Includes hypervisors and network components |

To streamline log correlation, standardize timestamps across systems using Coordinated Universal Time (UTC) in ISO 8601 format (e.g., 2024-07-25T20:54:59.649Z).
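As a small sketch of that normalization, the function below converts a timezone-aware timestamp to UTC in ISO 8601 form with millisecond precision. The fixed UTC-05:00 offset for the legacy host is a hypothetical example.

```python
from datetime import datetime, timedelta, timezone


def to_utc_iso8601(stamp: datetime) -> str:
    """Convert a timezone-aware timestamp to UTC ISO 8601 with milliseconds."""
    if stamp.tzinfo is None:
        # Guessing a timezone would silently skew correlation, so refuse instead.
        raise ValueError("timestamp must carry a timezone")
    utc = stamp.astimezone(timezone.utc)
    return utc.isoformat(timespec="milliseconds").replace("+00:00", "Z")


# A legacy host that logs in a fixed UTC-05:00 offset (hypothetical).
legacy_tz = timezone(timedelta(hours=-5))
stamp = datetime(2024, 7, 25, 15, 54, 59, 649000, tzinfo=legacy_tz)
print(to_utc_iso8601(stamp))  # 2024-07-25T20:54:59.649Z
```

Rejecting naive timestamps forces the timezone question to be answered once, at ingestion, rather than during an investigation.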

Setting Up Continuous Log Monitoring

Centralize logs from all sources in a secure location to prevent tampering, and use at least two different types of audit devices for redundancy.

Configure endpoints to send logs directly to a remote SIEM and enable immutable storage during recovery to preserve forensic evidence. Be on the lookout for unusual patterns, like spikes in failed login attempts, unauthorized root-level access, "permission denied" errors, or changes to logging configurations. Most organizations keep logs accessible for three to twelve months for quick analysis, while compliance requirements may necessitate retention for up to seven years. To manage storage costs without compromising availability, implement automated lifecycle management for your logs.
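One of the patterns mentioned above, a spike in failed logins, can be detected with a simple sliding window. This is an illustrative sketch rather than a substitute for SIEM correlation rules; the threshold of five failures per minute and the account names are assumptions.

```python
from collections import deque
from datetime import datetime, timedelta


def failed_login_spikes(events, threshold=5, window=timedelta(minutes=1)):
    """Return (account, time) each time an account hits `threshold` failures within `window`."""
    recent = {}   # account -> timestamps of failures still inside the window
    alerts = []
    for when, account in sorted(events):
        q = recent.setdefault(account, deque())
        q.append(when)
        while when - q[0] > window:
            q.popleft()
        if len(q) >= threshold:
            alerts.append((account, when))
    return alerts


base = datetime(2025, 1, 1, 3, 0, 0)
events = [(base + timedelta(seconds=10 * i), "svc_backup") for i in range(5)]
events += [(base, "jsmith"), (base + timedelta(minutes=10), "jsmith")]

for account, when in failed_login_spikes(events):
    print(f"ALERT: {account} reached the failed-login threshold at {when.isoformat()}")
```

During recovery it may be worth lowering the threshold for service and backup accounts, since those rarely fail to authenticate under normal conditions.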

Monitor the health of your logging systems by tracking disk space, input/output performance, and failure metrics like dropped log requests. For accuracy, synchronize event timestamps across systems using at least three time sources. Protect log data during transmission with Transport Layer Security (TLS) 1.3 and cryptographic verification. Adopting the Open Cybersecurity Schema Framework (OCSF) can simplify data standardization, eliminating complex extraction and conversion processes.

"Deficiencies in security logging and analysis allow attackers to hide their location, malicious software, and activities on victim machines." - CIS Control 6

Once real-time monitoring is in place, the next step is isolating the most at-risk systems.

Isolating Vulnerable Systems

Beyond monitoring, isolating high-risk systems is essential to limit exposure during recovery. Network segmentation can shield critical assets from compromised legacy systems. Use virtual LANs (VLANs) and next-generation firewalls to separate legacy systems from public-facing segments. Implement a zero-trust approach to contain infections and isolate sensitive data.

For added protection, block all inbound traffic to isolated systems and tightly control outbound communication. Use a proxy to mediate communication between isolated and public networks, ensuring no direct connections are allowed. Secure these communications with HTTPS tunnels using mutual TLS 1.3 to guard against spoofing and man-in-the-middle attacks.

When systems are not actively involved in recovery or replication, disconnect them physically or logically to minimize their exposure. Automating air gaps with virtual machine power management can help by shutting down systems when not in use. For secure data restoration, create private endpoints within a Virtual Network, keeping recovery operations away from the public internet.

Backup and storage systems should remain hardened and completely separate from the corporate network to protect the recovery environment from attackers. Use scripts and workflows to define blackout periods, during which no administrative actions or data transfers are permitted. This ensures resources are effectively air-gapped during those intervals.
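A blackout-period check of the kind described could be as simple as the sketch below, which a job scheduler or wrapper script would consult before allowing an administrative action or data transfer. The window times are hypothetical and assumed to be in UTC.

```python
from datetime import time

# Hypothetical blackout windows in UTC, as (start, end) pairs; end is exclusive.
BLACKOUT_WINDOWS = [
    (time(22, 0), time(4, 0)),    # overnight window that wraps past midnight
    (time(12, 0), time(13, 0)),
]


def in_blackout(now: time) -> bool:
    """Return True if `now` falls inside any configured blackout window."""
    for start, end in BLACKOUT_WINDOWS:
        if start <= end:
            if start <= now < end:
                return True
        elif now >= start or now < end:   # window crosses midnight
            return True
    return False


print(in_blackout(time(23, 30)))  # True
print(in_blackout(time(9, 0)))    # False
```

The check itself does not enforce anything; it only helps if the firewall rules, VM power state, or job runner actually honor its answer.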

Creating Incident Response Plans for Legacy Systems

After monitoring and isolating potential threats, it's time to prepare your team for incident response. A staggering 91% of organizations have faced tech-related business disruptions in the last two years. Legacy systems, with their outdated documentation and limited vendor support, often complicate recovery efforts. By building on monitoring and isolation practices, these steps can help ensure a fast and organized response.

Setting Up Clear Incident Protocols

Start with a Business Impact Analysis (BIA) to identify which legacy systems are critical and establish key recovery metrics like Maximum Tolerable Downtime (MTD), Recovery Time Objective (RTO), and Recovery Point Objective (RPO). For example, if a legacy manufacturing system has an MTD of four hours and an RTO of two hours, your backup plan must align with this tight timeline.

Form a Cybersecurity Incident Response Team (CSIRT), led by an Incident Response Lead (IRL), and include representatives from security operations, legal, privacy, HR, marketing, and law enforcement contacts. To streamline recovery, create a risk classification matrix to prioritize incidents by severity. For legacy systems, include an appendix with hardware/software inventories, architectural diagrams, and interconnection maps - these resources clarify dependencies and speed up recovery efforts. These protocols, combined with encryption and backup strategies, provide a solid foundation for protecting legacy systems.

Activation triggers should be clearly defined. With over 23% of security exposures tied to critical IT infrastructure, such as administrative login pages accessible online, protocols must outline who to notify, how to communicate (e.g., using conference bridges if primary systems fail), and the initial steps team members need to take within the first 30 minutes of identifying an incident.

Running Recovery Simulations

Regular tabletop exercises help team members understand their roles and reveal potential gaps in expertise, particularly for legacy systems that may lack knowledgeable staff. These sessions should include not only security teams but also business leaders and developers.

"Security should be considered everyone's job. Build collective knowledge of the incident management process by involving all personnel that normally operate your workloads."

- AWS Well-Architected Framework

Take it further with functional exercises to test real restoration processes in a simulated environment. Use actual production data and involve experts familiar with legacy systems to uncover compatibility issues with modern recovery tools.

After every simulation or real-world incident, compile an After Action Report (AAR). This report should document successes, failures, and areas for improvement. Track performance using metrics like Mean Time to Detect (MTTD), Mean Time to Acknowledge (MTTA), Mean Time to Contain (MTTC), and Mean Time to Recovery (MTTR). Review and update your incident response plan annually or whenever significant changes occur in your legacy system environment.
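These metrics are straightforward to compute once each incident or exercise records a handful of timestamps. Definitions vary between organizations; this sketch assumes MTTD is measured from incident start to detection, and MTTA, MTTC, and MTTR from detection. The incident records are invented for illustration.

```python
from datetime import datetime
from statistics import mean


def mean_minutes(incidents, start_key, end_key):
    """Average elapsed minutes between two recorded timestamps across incidents."""
    return mean(
        [(i[end_key] - i[start_key]).total_seconds() / 60 for i in incidents]
    )


def t(hhmm):
    """Helper: build a timestamp on a fixed, hypothetical day."""
    return datetime.strptime("2025-01-15 " + hhmm, "%Y-%m-%d %H:%M")


incidents = [
    {"started": t("09:00"), "detected": t("09:30"), "acknowledged": t("09:40"),
     "contained": t("10:30"), "recovered": t("12:30")},
    {"started": t("14:00"), "detected": t("14:10"), "acknowledged": t("14:30"),
     "contained": t("14:40"), "recovered": t("15:10")},
]

metrics = {
    "MTTD": mean_minutes(incidents, "started", "detected"),
    "MTTA": mean_minutes(incidents, "detected", "acknowledged"),
    "MTTC": mean_minutes(incidents, "detected", "contained"),
    "MTTR": mean_minutes(incidents, "detected", "recovered"),
}
for name, minutes in metrics.items():
    print(f"{name}: {minutes:.0f} min")
```

Whichever definitions you adopt, record them in the After Action Report so that figures from different exercises stay comparable.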

These simulations not only refine your response strategies but also enhance communication with stakeholders when an actual incident occurs.

Communicating with Stakeholders

Attackers can escalate from initial access to data theft in just 72 minutes, making rapid communication essential. Develop communication templates that specify tools, update frequency, and target audiences to keep everyone aligned during high-pressure recovery phases.

"What happens in the first minutes after initial access can determine whether an incident becomes a breach."

- Sam Rubin, Vice President, Unit 42

Use a RACI matrix (Responsible, Accountable, Consulted, and Informed) to assign clear roles for communication during incidents. Legacy system incidents often require translating technical jargon into plain language for stakeholders. Notify your legal team immediately if customer data is compromised, and keep executives updated on recovery progress and business impact.

Don't overlook regulatory requirements. With 57.8% of professionals anticipating more complex data protection rules in the future, your plan should include templates for notifying regulatory bodies within required timeframes. Identify which legacy systems handle regulated data and outline specific procedures for breaches involving those systems. This ensures compliance while maintaining transparency with stakeholders during a crisis.

Conclusion

Protecting legacy systems during disaster recovery calls for a layered approach that combines audits, encryption, reliable backups, monitoring, and a swift response plan. While backups act as the last safeguard, their effectiveness hinges on proper key management, immutability, and verified recovery processes.

"Backups are the last line of defense when protecting your data from malicious activities, but might prove worthless if recovery is not possible due to incomplete backups or backups that are not valid."

- AWS Documentation

Start by conducting detailed vulnerability assessments to identify weak points. Use encryption with strong key management to ensure sensitive data stays secure. Strengthen backups by making them immutable and storing them in isolated environments to shield them from ransomware attacks.

Beyond that, continuous monitoring is essential. This helps detect unauthorized changes - such as tampering with backup policies or deletion attempts - before they escalate into larger issues. Pair this with an incident response plan that includes regular recovery drills and clear communication protocols. These steps help ensure your team can meet Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO) under pressure.

For legacy systems, where recovery failures can have serious consequences, these measures are non-negotiable. Together, they provide a robust framework to safeguard critical data and maintain operational continuity during disasters. By integrating these practices, you can build a defense that keeps your legacy systems secure, even in the face of unexpected challenges.

FAQs

How do I add MFA to a legacy app without changing its code?

You can implement MFA for a legacy application without touching its code by using a no-code MFA proxy. This proxy works as a middleman, adding MFA to the login process without requiring changes to the application's source code. Simply configure the proxy to connect with your chosen MFA provider and integrate it into your current setup. This approach boosts security for your legacy system without the hassle of code modifications.

What backup setup best protects legacy data from ransomware?

The best way to safeguard legacy data from ransomware is to establish immutable, recoverable backups. These backups should be stored in a location separate from the primary system, making it harder for attackers to access or tamper with them. To ensure reliability, it's essential to test these backups regularly, confirming that the data can be restored without issues when needed.

How can I monitor legacy systems that can’t send modern logs?

To keep an eye on legacy systems that don't support modern logging, consider using centralized log collection methods. Options like network-based monitoring or specialized log collectors can help gather data effectively. Make sure to forward these logs to secure, off-site repositories or SIEM platforms with encryption and strict access controls in place.

It's also important to set up clear event logging policies. These should outline what events need to be logged, how to monitor them, and how long to retain the data. This approach ensures you maintain visibility and meet compliance requirements, even when dealing with older systems that lack advanced logging features.